Cracking the Code...

12/16/2019

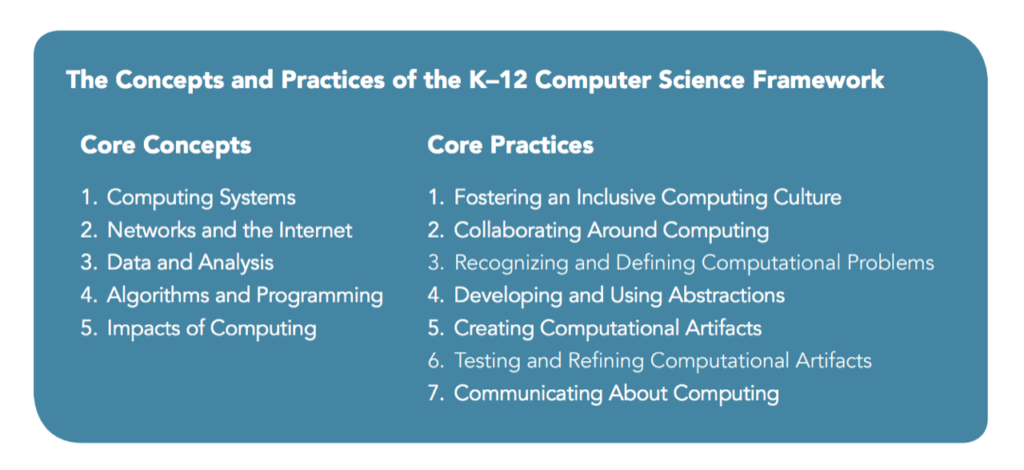

Though we often think of smartphones and laptops when we hear the word “technology,” the term is much broader than that. In fact, technology is any application of scientific knowledge for practical purposes. It includes machines, but it also includes techniques and processes; early human technology included the wheel and the hammer. In a school context, slates and chalk gave way to pencils, which gave way to pen and ink. Throughout human history, technological advances have ushered in new eras, new industries, and both negative and positive impacts.

When I was attending college to prepare for my teaching career, I was required to take a class on “instructional technology.” In this class, I learned how to effectively use tools such as an overhead projector, an opaque projector, and a 16 mm film projector to enhance my instruction. Then, as a new teacher, I learned how to use the mimeograph machine to create my own handouts and parent newsletters, and I spent many an evening removing purple mimeograph ink from my hands. In fact, if you are older (like me), you might find this image humorous and familiar at the same time.

Throughout the ensuing years, new technologies constantly changed the way I taught and the things I asked my students to do. Overhead projectors became interactive whiteboards; typewriters were replaced with word processors; tape recorders gave way to CDs and then MP3 players; TV shows evolved into videotapes, then DVDs, then video streamed on demand. In the last year or so, my use of the whiteboard and marker has dropped significantly as I have learned to use my iPad and Apple Pencil. Every few years the technology changed, and there were new things to learn and new ways to enhance the educational experience.

The biggest change of all was the introduction of personal computers. Computers have impacted our world in revolutionary ways.
Computers can represent our physical reality as a virtual world, and they can follow instructions to manipulate that world. Ideas, images, and information are translated into bits of data and processed in infinitely creative ways. Computers are more than just a tool: they are a medium for creative personal expression and critical problem solving.

As computers became more and more ubiquitous and user friendly, I enjoyed the challenge of helping students of all ages understand how to use them effectively and responsibly. We created music and video, made our own slide shows, and created art and spreadsheets. And yet, there was a missing component to my curriculum: actual computer science. Computer science is the field of understanding why computers work and how to create those technologies, as well as the related rights, responsibilities, and applications. Instead of being only passive consumers of computing technologies, students also needed to learn to be active producers and creators.

Why? Well, learning to code is not just for coders. Computer science has driven innovation in every field and is powering approaches to many of our world’s toughest challenges. Computer science in this century opens more doors than any other discipline. Learning the basics will help students in any career, from architecture to zoology. Seventy-one percent of all US jobs require digital skills. High-skilled computing occupations are the fastest-growing, and now the largest, sector of all new wages in the US.

Just as they learn how to write an essay or how electricity works, every 21st-century student should have a chance to design an app and learn how the internet works. These are critical literacies in their world and the key to solving problems and creating things we can't even imagine. How exciting it is to help them crack the code of the future!


Elizabeth Ferry

Ms. Ferry's experience includes teaching with the Peace Corps in Tanzania and teaching high school English in Maine; this is her second year at PNA. She loves moose, outdoor activities, and being with her students.
